Unreal Engine enriching Videosync - opening up a door to enhanced event participation experiences (XR)


February 7, 2022


One of the perks of working for a company with a fairly in-your-face Teal culture is the WOW experiences that occasionally emerge. When teams and people are given a genuine opportunity to try and fail, they tend to experiment with bolder-than-average concepts and technologies. A few weeks ago, one of these experiments blew my mind. Not just because it was a fantastic display of creativity and novel technology, but also because it opened a whole new door for virtual events. By entering the world of XR, our clients get a bunch of new tools for creating amazing virtual event experiences.

Converting a low-interest chat into an engaging experience

Inderes, the company behind the virtual and hybrid event platform Videosync, was listed on Nasdaq Helsinki First North Stock Exchange in October 2021, which brought us some must-obey rules and regulations. These rules are not super complex, but they’re critical to follow, and unfortunately for most of us, they’re a relatively dull subject. This is a typical internal communications problem: you need people to fully internalize something that doesn’t genuinely interest them. Apologies to all compliance professionals. I mean no offense, this is just an observation.

And the Oscar for Best Actor goes to… Tero Jokinen

The first WOW came from the concept of this internal event, and from how it tackled the challenge of communicating something that is unlikely to be top of mind for the target audience.

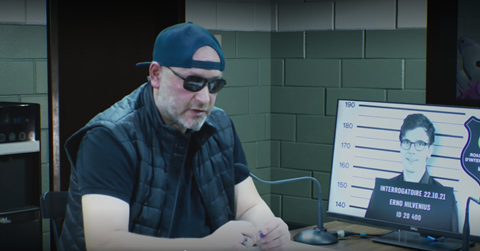

The concept in a nutshell: a member of the secret service, or Supo, as it's called here in Finland, interviews (roasts) the CEO of Inderes. Tero Jokinen, who is responsible for studio development at FLIK in his day job, plays the agent, and Mikael Rautanen, Inderes CEO, appears in a cameo role as himself. Prior to the livestreamed event, the audience, i.e., our staff, was given the opportunity to post roast questions about the IPO in different Slack groups that Mikael wasn’t in. Mikael was the only person who didn't know that he would be roasted (actually, he thought that Tero would discuss some studio plans with him). All communication regarding the event lacked the traditional "corporate topping" and was kept very informal.

Image: The green screen background was bought from Epic’s marketplace. Tero looking like Fred Durst was not.

The surprise element in this concept gave a small but super-important flavor to the whole show: it made the situation more authentic and interesting, instead of being "people dressed up and just blasting their message". More importantly, engaging everyone (except Mikael) before the event meant that the content was actually co-created by the Inderes staff. This is different from the traditional approach, where content is designed, scripted and filtered by corporate comms and management. Finally, even if the show contained funny elements, the purpose of the event was far from comedy. The matters discussed were strictly business, even though the whole setting made the content fun and interesting to watch.

Image: The multi-camera production contained some embedded elements

Leaping frogs and flying doughnuts contain more than entertainment value

So, what made it fun? Tero probably knew that writing 45 minutes of comedy is difficult, and that in a corporate setting it could lead to cringeworthy tragicomedy. Instead of writing pseudo-humorous punchlines and inside jokes, he gave the tools to the audience.

Generally, virtual events use chat, polls and the like to engage attendees. In this concept, the engagement was taken up a few notches with tangible emojis. The level of delivery gave me the second WOW of the experiment.

Videosync’s emoji reaction component is widely used in different types of events. In addition to engaging the audience, the reaction stats give event organizers good insight into which parts of the content generated reactions in the audience. Instead of emojis flying over the video as per usual, this time the emoji reactions were visualized in the video itself. Clicking the frog emoji made a frog crawl on the floor, unicorns were stacked in the observation room behind the interrogation room, and doughnuts were thrown by an officer standing in the background.

(quality of the GIF does not correspond to broadcast quality)

Some of you may be asking: why do it, what's the point? Fair enough, this type of display of audience reactions doesn’t generate any hard value per se. But what it did for me, and for some colleagues I talked to after the event, was make us more alert. The whole concept, together with the very surprising engagement element, took us from our corporate mode to a different space, one where our brains were in a more relaxed state and more receptive to the content. And of course, this increased the chance of forming a more lasting memory and a better understanding of the topic at hand.

Videosync and Unreal Engine for real

To be honest, I have been quite sceptical about XR implementations in virtual events. Probably because they have focused mostly on the visual world, which to me personally, as an event attendee, brings quite limited added value. The third and final WOW that I’d like to share came from realizing the different ways XR can be used to bring value to events through engagement.

In this experiment, the integration between Videosync and Unreal Engine was fairly simple and straightforward. Videosync’s reaction click count was passed to Unreal Engine via an API, and in UE, this information was used to trigger actions in the XR background. Naturally, this requires Unreal Engine skills, so “simple” may undersell the effort involved. Still, we're not talking rocket science here.
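To make the idea concrete, here is a minimal sketch of the "click count in, scene action out" step. It is not the actual integration: the action names, the message format and the `ReactionBridge` class are all hypothetical, and the transport between Videosync and UE is left out entirely. It only shows how cumulative reaction counts could be turned into "trigger this N more times" messages.

```python
import json
from dataclasses import dataclass, field

# Hypothetical mapping from a reaction name to the scene action it triggers.
# The real event used frogs, unicorns and doughnuts; these action names are invented.
ACTIONS = {
    "frog": "SpawnCrawlingFrog",
    "unicorn": "StackUnicorn",
    "doughnut": "ThrowDoughnut",
}


@dataclass
class ReactionBridge:
    """Remembers the last seen cumulative counts and emits only the new clicks."""

    last_counts: dict = field(default_factory=dict)

    def triggers_for(self, counts: dict) -> list:
        """Turn cumulative click counts into one trigger per action with new clicks."""
        triggers = []
        for emoji, total in counts.items():
            action = ACTIONS.get(emoji)
            if action is None:
                continue  # reaction without a visual in the scene
            new_clicks = total - self.last_counts.get(emoji, 0)
            if new_clicks > 0:
                triggers.append({"action": action, "times": new_clicks})
            self.last_counts[emoji] = total
        return triggers


def to_message(trigger: dict) -> str:
    """Serialize a trigger as JSON (assumed wire format for the engine side)."""
    return json.dumps(trigger, sort_keys=True)
```

Calling `triggers_for` with each new snapshot of counts returns only what changed since the previous call, so the engine side never has to keep track of totals itself.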

The biggest eye-opener of all was what this passing of data between Videosync and UE could bring to virtual events in terms of engagement. For example, when we take questions and comments from the audience chat into the main feed, we could bring the commenter's "player card" to the video feed along with the comment. The card would consist of their Twitter profile image, name and profile description (if they signed into the event with Twitter). Or we could enable audio emojis to give the audience yet another tool to participate and express themselves.
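As a rough illustration of the player card idea, the sketch below assembles the data that could accompany a comment into the video feed. Everything here is an assumption: the field names, the `build_player_card` helper and the shape of the profile dictionary are invented for the example, and attendees who did not sign in with a social profile simply get a card with their display name and comment.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class PlayerCard:
    name: str
    comment: str
    profile_image_url: Optional[str] = None
    description: Optional[str] = None


def build_player_card(comment: str, display_name: str,
                      profile: Optional[dict] = None) -> dict:
    """Combine a chat comment with whatever profile data the attendee shared."""
    profile = profile or {}
    card = PlayerCard(
        name=profile.get("name", display_name),
        comment=comment.strip(),
        profile_image_url=profile.get("image_url"),
        description=profile.get("description"),
    )
    # Leave out fields the attendee did not provide.
    return {key: value for key, value in asdict(card).items() if value is not None}
```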

In practice, whatever the audience does in Videosync, and whatever information they have given about themselves, could be fed to Unreal Engine for some XR magic to make the event more WOW.

The holy grail of top-notch creativity and technology in the virtual event industry

To wrap up the key takeaways in this post:

XR enables interesting possibilities for creative event concepts, and instead of just visual backdrops, the focus should be on audience engagement and creating a receptive attendee.

Experimenting with internal events without the corporate comms topping is worthwhile. The power of increased receptiveness may surprise you.

For event agencies and production studios, Videosync now has a cool way to tap the potential of XR-style audience engagement for virtual and hybrid events with Unreal Engine.

While XR events are still a relatively marginal phenomenon, they are up-and-coming. For event agencies at the top of the leaderboard (as well as the challengers), XR know-how and excellence will likely bring a competitive advantage. Based on the implementations I've encountered, though, I think the focus and creativity of event agencies should shift from creating a visual space to creating a mental space. The engagement opportunities of XR have the potential to bring much more impact and value to an event than a digital backdrop and avatars.

Videosync has taken a leap toward bringing more attendee value to events by deploying XR opportunities. However, we feel that there is still much to do to enable an excellent audience experience and value without XR as well, so our main focus will continue to be on facilitating awesome "legacy"😉 virtual events.

We're happy to discuss any tech co-operation, as well as client projects in the XR area. Just drop us a line and we'll be in touch.


Inderes Oyj

FI22776002, info@videosync.fi

Helsinki

Porkkalankatu 5, 00180 Helsinki, Finland

Stockholm

Brantingsgatan 44, 115 35, Stockholm
